How To Stop Paying For Trash In The Backlink Index?

And the results truly amazed us! (We honestly tried to avoid clickbait here, but there's no way around it). Long story short, the backlink index volume is not the crucial point.

The bigger the database, the lower its quality tends to be. Large backlink databases often suffer from outdated data and duplicates. But wait, there's more! We've gathered a set of backlink insights you just can't afford to miss!

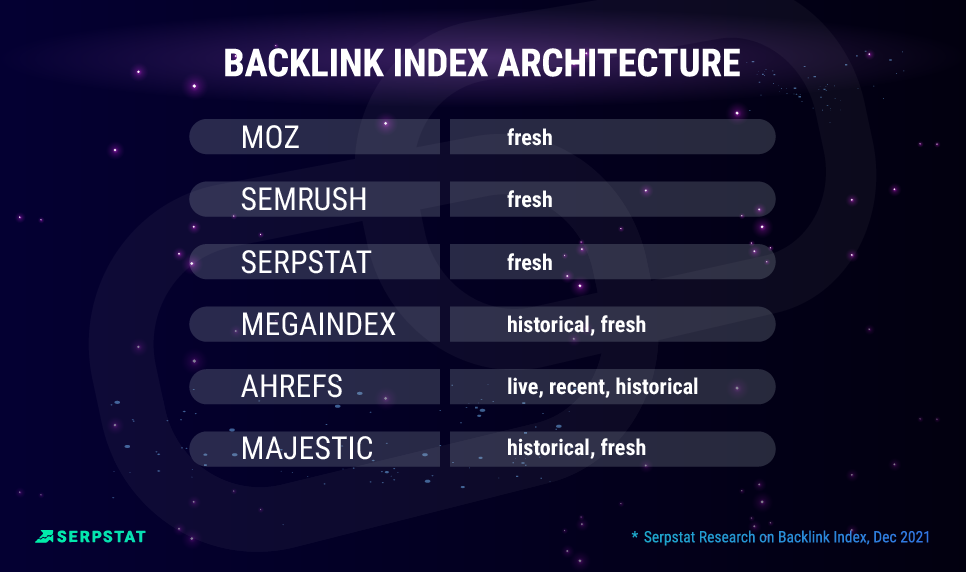

How Are Link Indexes Different On Each Platform?

Ahrefs' backlink index is built with the following subindexes:

- Live: links that were found during the last crawl and remain active until the next crawl;

- Recent: active links, plus lost links that were listed as "live" within the last 3-4 months. This list may include links that were marked lost for technical reasons;

- Historical: all links to the domain that have ever been assigned the "live" status since 2016 (the launch of the Ahrefs index). By now, these links may no longer be relevant.

Moz displays "fresh" data from the last crawl. Not all links are refreshed on the same schedule: higher-quality pages are recrawled first, and a machine-learning algorithm determines page quality.

Majestic's Index consists of the following parts:

- "Fresh index" is all links considered active during the last crawl. The update rate is from 90 to 120 days.

- "Historical index" contains, in addition to the fresh-index data, all links that have ever been detected for the analyzed domain.

The main disadvantages of such programs are that they run slower than cloud services, lack cross-platform compatibility, and require installation and regular updates to get additional features. On the plus side, they make filtering and sorting more convenient: the data is downloaded from the database gradually, and you can work with it locally.

- What are Live, Recent and Historical links?

- How are Backlinks Updated?

- How We Index The Web

- Majestic launch a Bigger Fresh Index

Backlink Index Comparison: Methodology

- Backlink index volume;

- Data refresh rate;

- Link accuracy;

- Match rate (the uniqueness of backlinks).

#expert_perspective

Secondly, I need to see the links that are relevant to the page. Seeing thousands of auto-generated "top 500 web pages" links from well-known directory pages does not help if I miss recently published links in topic-related forums or user reviews.

- Link placement. You have to make sure it's not located in a directory with purchased links only (better to check manually);

- DR (using Ahrefs API);

- The quantity of referring domains and pages (using Serpstat API);

- The total number of outbound domains and pages (using Serpstat API);

- The number of keywords the site ranks for (using Serpstat API);

- The change in the number of keywords over the last six months (using Serpstat API);

- Topical relevance (better to check manually).

Knowing how many links to my domain a referring domain provides is also useful, so I can see at a glance whether the link is being spammed or is perhaps placed sitewide. I think it's also beneficial to show the overall number of referring domains and backlinks my chosen domain has, as a quick overview that can easily be monitored for sudden spikes or drops.
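The per-domain count described above can be sketched in a few lines of Python. This is only an illustration: the input is assumed to be a plain list of referring page URLs exported from any backlink tool, and the function names and the threshold are hypothetical, not part of any platform's API.

```python
from collections import Counter
from urllib.parse import urlparse


def links_per_referring_domain(referring_urls):
    """Count how many backlinks each referring host provides."""
    counts = Counter()
    for url in referring_urls:
        host = (urlparse(url).hostname or "").lower()
        if not host:
            continue  # skip malformed rows
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com as one host
        counts[host] += 1
    return counts


def flag_heavy_linkers(counts, threshold=100):
    """Return hosts with at least `threshold` links (assumed cut-off for sitewide or spammy links)."""
    return sorted(host for host, n in counts.items() if n >= threshold)
```

A host that crosses the threshold is not necessarily spam; it is simply a candidate for a manual check, for example a footer link repeated on every page.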

Though not essential, the average monthly traffic of a referring domain is a useful indicator to see how popular a website is without having to research it further using other toolsets, as would the statistics about the referring domain's own backlink profile.

When working on an "at scale" campaign, i.e. looking at domains "in bulk", I'm looking first for DR, to some extent the UR, DomPop, TF and CF.

When I go for the more targeted approach, I add more granular, mostly qualitative criteria, such as the trend of the domain's visibility index, language, follow/nofollow, or a direct look at the website: freshness of content, where the backlink could go, and other factors.

If I'm looking at a backlink index for monitoring and reporting, I tend to keep things simple: DR, DomPop, TF and CF. The more data points we bring in, the more complex it gets, and the harder it is to report to non-SEO people.

I usually focus on the following parameters:

- The traffic on the source page;

- How many keywords from regional TOP-100 are listed on a page;

- The type of link (not just dofollow/nofollow, but also sponsored, UGC, comment link, footer or site-wide link). If you think about it, you can come up with many more types: image link, anchor, non-anchor, etc.;

- Page type (based on micro-markup or domain). For instance: Governmental, Educational, Catalog, Forum, Review, Blog;

- Number of other outbound links on this page;

- Date the page was published and the date of the first discovery;

- Domain's topic;

- Links' title;

- Domain's title (useful for filtering out unnecessary domains);

- Length of content on a given page;

- Whether the domain is listed in the database of a link exchange service (it would be handy to catch these);

- DR and UR;

- number of referring domains, backlinks, acceptors;

- follow / nofollow proportion;

- percentage of anchor / non-anchor links, as well as brand anchors;

- presence of .gov and .edu domains;

- the volume of traffic and keywords, etc.

We also check a site's top-performing keywords to confirm that it ranks for pay-worthy keywords relevant to our client's niche. The DR must be stable, and the site should have fewer outbound links than inbound links.

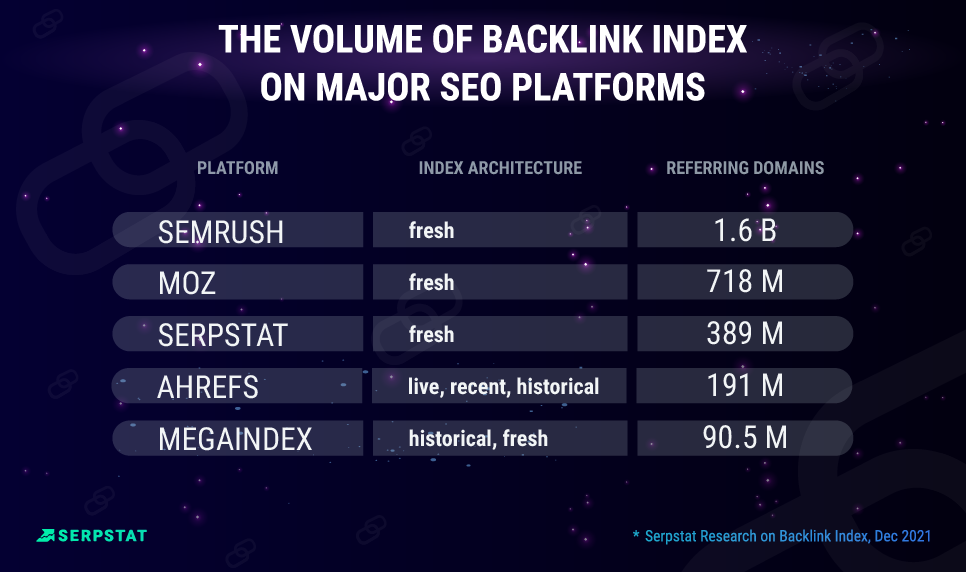

Large Database: Advantage Or Disadvantage?

- whether robots.txt, meta robots, X-Robots-Tag headers, and noindex/nofollow directives are taken into account;

- the availability of the website during several simultaneous crawling sessions;

- individual blocking of platforms through the above methods or by HTTP headers, by the type of request the crawler makes (GET, POST, HEAD), by identifier (User-Agent), or at the IP level.
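To illustrate the robots.txt and User-Agent points, here is a minimal sketch that checks whether a crawler is allowed to fetch a page, using Python's standard `urllib.robotparser`. The robots.txt content and the bot name `ExampleBacklinkBot` are made up for the example; a site that blocks one backlink crawler this way will have its outgoing links missing from that platform's index while other platforms still see them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one backlink crawler is blocked entirely,
# everyone else is only kept out of /private/.
ROBOTS_TXT = """\
User-agent: ExampleBacklinkBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if `user_agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

This covers only robots.txt rules. Meta robots tags, X-Robots-Tag headers, and IP-level blocks have to be checked separately.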

Take advantage of the Recrawling URL Tool. Submit a list of pages with new backlinks, and Serpstatbot will collect data within 72 hours.

#expert_perspective

It would be a perfect feature to filter only referring pages in the Google index.

No database gives 100% coverage, so both the relevance and the size of the data matter. Some platforms can boast about many links and domains, but these may include duplicates.

Inside a backlink index tool, you need a feature that finds these duplicate links, filters junk links out of site analysis reports, and so on (in Serpstat, this is done automatically). Otherwise, you have to dig through massive data tables to find the valuable donors. Often, you end up with 100,000 rows, of which fewer than three hundred are links actually worth looking at.

What can be super frustrating are duplicated links, as they create "noise". I like tools that try to list the "original" link and flag copies automatically.
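A rough sketch of what "list the original and flag the copies" could look like is shown below. The normalization rules (ignore the scheme, a leading `www.`, the fragment, and a trailing slash) are assumptions chosen for illustration, and the first occurrence is simply treated as the original; real tools likely use more elaborate rules.

```python
from urllib.parse import urlsplit


def normalize(url):
    """Reduce a URL to a comparison key: host without www, path without trailing slash, query."""
    parts = urlsplit(url.strip())
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    query = f"?{parts.query}" if parts.query else ""
    return f"{host}{path}{query}"


def flag_duplicates(links):
    """links: iterable of (source_url, target_url) pairs.

    Returns one dict per link; `duplicate_of` is None for the first
    occurrence and the original source URL for every later copy.
    """
    first_seen = {}
    report = []
    for source, target in links:
        key = (normalize(source), normalize(target))
        if key in first_seen:
            duplicate_of = first_seen[key]
        else:
            first_seen[key] = source
            duplicate_of = None
        report.append({"source": source, "target": target, "duplicate_of": duplicate_of})
    return report
```

Filtering the report to rows where `duplicate_of` is `None` gives the de-duplicated list, while the flagged rows remain available if you want to inspect the copies.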

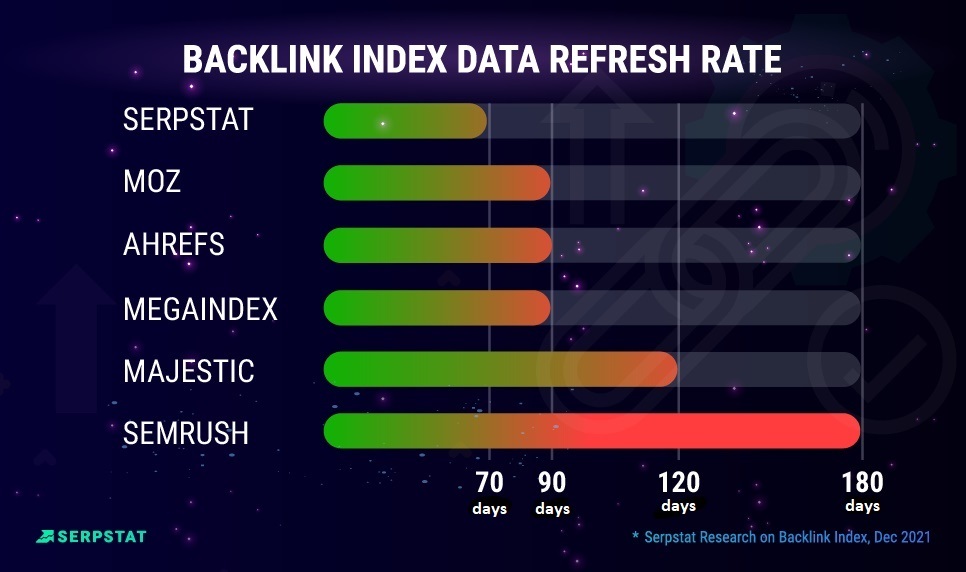

Data Refresh Rate

#expert_perspective

Nevertheless, I know that this is only possible to a limited extent, even with the greatest usage of link crawling resources. So a delay of a few days or weeks won't be that bad.

Some SEO tool providers offer features to import link data from Google Search Console, Bing Webmaster Tools, and similar webmaster services. That's particularly useful for getting even more backlink data during link-building campaigns.

Besides a "real-time" backlink index, it's also useful to have a separate index that shows outdated, lost, or broken backlinks.

Getting new links right away is, of course, the ultimate goal, but it is not really realistic or practical.

Integration with webmaster tools is very important, so that backlinks from potential domains can be quickly forced into the index. So far, no such functionality exists, and we have to look up almost every link manually through search.

There is a direct correlation between links and positions, but the devil is in the details. A clean link profile in white-hat SEO matters more than many quantitative metrics; I discovered this in 2016-2017, and it has held true since. We often build backlinks through outreach, and we are not always notified by email that a link has been placed. So we have to search for these links manually, because the platforms' backlink indexes pick them up with a delay.

Link Accuracy

Match Rate

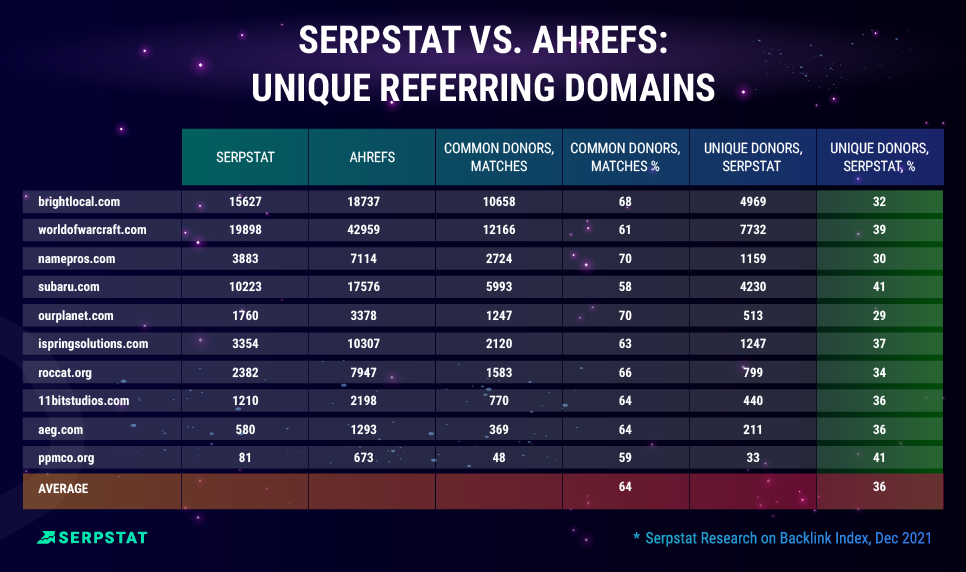

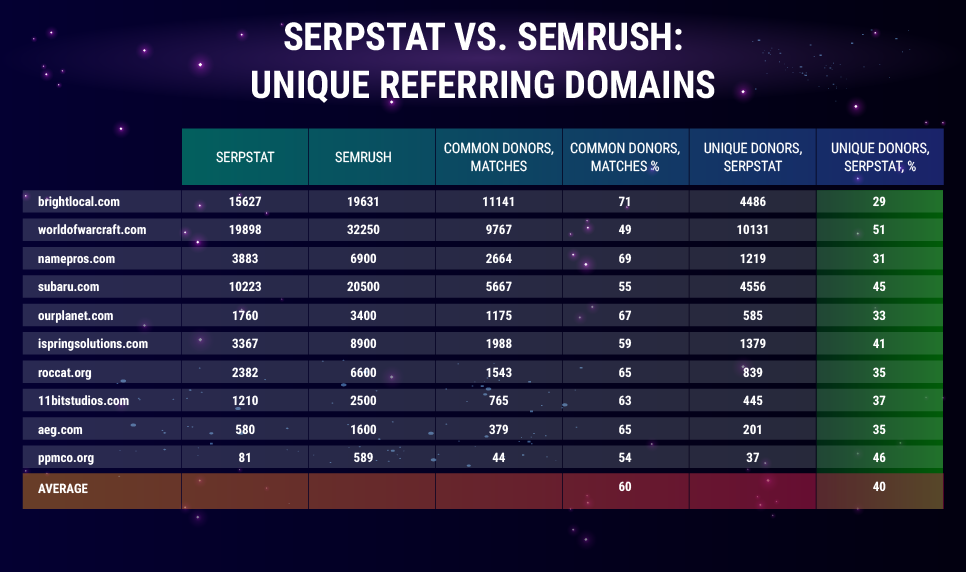

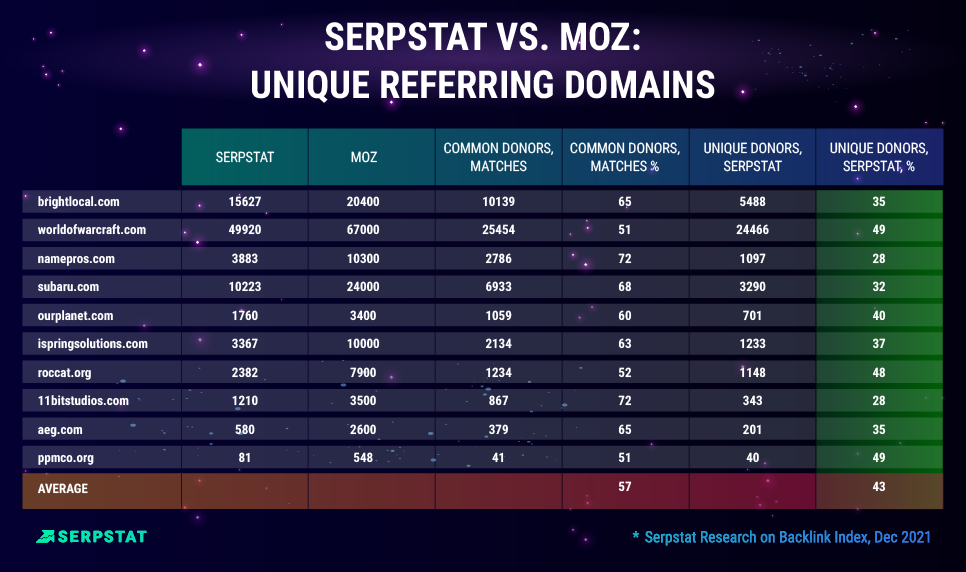

The share of unique referring domains shows the percentage of donors that similar reports on competitors' platforms did not detect.

For this analysis, we randomly selected ten domains, and we tried to diversify the selection in terms of geography, niche (from gaming to YMYL), and approximate traffic. The domains we researched:

- brightlocal.com (USA) - online marketing

- worldofwarcraft.com (USA) - gaming

- namepros.com (USA) - web hosting

- subaru.com (USA) - the automotive industry

- ourplanet.com (USA) - environmental protection, government organizations

- ispringsolutions.com (USA) - IT, programming

- roccat.org (USA) - video games and consoles

- 11bitstudios.com (Poland) - game design

- aeg.com (Ukraine) - equipment

- ppmco.org (USA) - a government health organization.

- Referring donors found by Serpstat;

- Referring donors found by the competing service;

- Donors common to Serpstat and the competitor;

- Donor domains unique to Serpstat.
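The four figures above boil down to simple set arithmetic. The sketch below assumes two plain lists of referring domains for the same analyzed site, one per platform, and assumes that the share of unique donors is measured against the first platform's own total; the function name and input format are illustrative only.

```python
def compare_donors(our_donors, competitor_donors):
    """Compare two lists of referring domains for the same analyzed site."""
    ours = set(our_donors)
    theirs = set(competitor_donors)
    common = ours & theirs   # donors both platforms found
    unique = ours - theirs   # donors only the first platform found
    # Assumption: the share is relative to the first platform's donor count.
    unique_share = len(unique) / len(ours) * 100 if ours else 0.0
    return {
        "our_total": len(ours),
        "competitor_total": len(theirs),
        "common": len(common),
        "unique": len(unique),
        "unique_share_pct": unique_share,
    }
```

For example, if one platform reports four donors and the other reports three, with two in common, the first platform has two unique donors, a 50% unique share.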

#expert_perspective

But as already mentioned, quantity is not the only criterion to take into account. This data should always be taken with a grain of salt. Spoiler alert: the data still shows a lot of spammy links coming up.

My main points would be that you should always weigh your own data against data from other sources, look at quality rather than quantity, and remember that relevancy is key! So yes, checking your backlink profile in different tools is always a good idea.

Accuracy and the possibility to tweak the way those tools gather their link information are things I always have to struggle with. I do not look at index sizes but try to use databases that represent a data set that gives me the most accurate data.

I think Serpstat is the first I've seen to do that out of the different tools I've used over the years. Whilst this is helpful to cut out the noise, knowing about the spam sites is sometimes useful in case those domains are actually impacting your domain's health.

So far, no tool gives clear parameters needed for filtering out, so you have to sort the domains and check them separately.

Serpstat has a good link analysis tool, and its data is worth exploring.

Nikolay Shmichkov, SEO specialist at SEOquick

Serpstat's solution is pretty good so far in terms of convenience (it has an API function for analyzing backlinks and a URL rescan tool). When you work with massive data, it saves a lot of time.

Aleksey Biba, Head of SEO, privatbank.ua

Why Serpstat?

Since links are one of the leading ranking factors in SEO, you need to pay careful attention to link analysis and to the quality of the data you analyze.

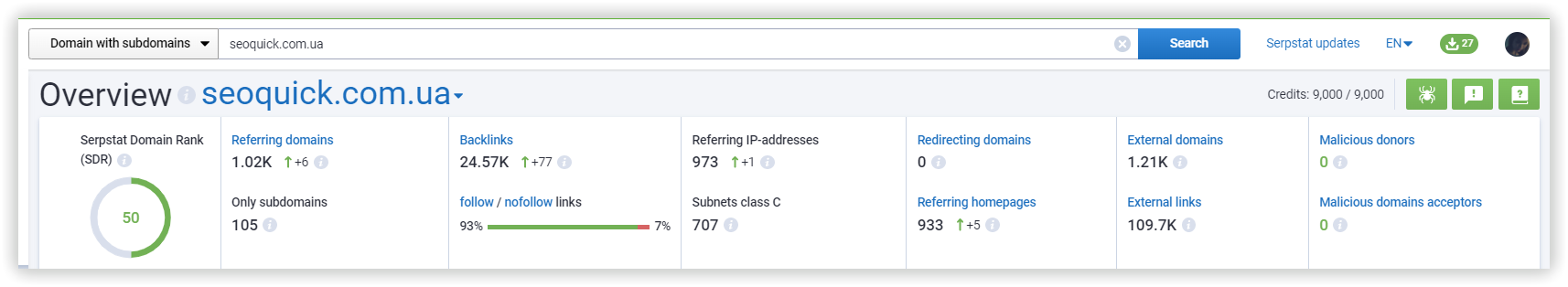

Serpstat focuses on the quality of the index data. And, in addition to the regular reports of link analysis, such as:

- Referring domains

- Backlinks

- External links

- External domains

- Link anchors

- Top pages (by link diversity)

The tool is helpful if you have purchased links and want to track their impact on SDR immediately, so that you can remove them if necessary and avoid negative consequences. It also helps when your client needs up-to-date information "for today" and the new links have not yet appeared in the report.

For example, you want to find high-quality and relevant link placement websites. Previously, you would have to explore each competitor separately and manually search for common sites in the reports. Now it is enough to find several relevant competitors, enter their domains in the tool and automatically find those donors who link to all of them.
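The underlying idea is a set intersection over the competitors' donor lists. The sketch below is not Serpstat's implementation; it assumes you already have a list of referring domains per competitor (for example, exported reports), and the function name and input format are hypothetical.

```python
def donors_linking_to_all(donors_by_competitor):
    """donors_by_competitor: dict mapping competitor domain -> iterable of referring domains.

    Returns the sorted donors that link to every competitor in the dict.
    """
    donor_sets = [set(donors) for donors in donors_by_competitor.values()]
    if not donor_sets:
        return []
    return sorted(set.intersection(*donor_sets))
```

A donor that links to all of your competitors but not to you is a natural first candidate for outreach.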

Conclusion

SEO specialists often take advantage of different platforms and combine data from several reports and indexes for a comprehensive analysis. One of these tools in your arsenal can be Serpstat, which, in addition to its backlink index, also has numerous unique reports for backlink analysis.

Well, we have shared various insights from the world of backlinks. Leave comments to discuss the article and ask additional questions about backlink diversity analysis in Serpstat :)
